Sensor Stations

Thermal

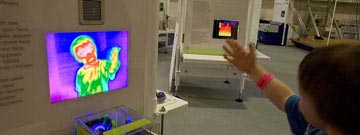

See a face that only a thermal-imaging mother could love at roboworld®!

How do rescue robots see through thick smoke in a burning building? They use thermal imaging cameras with sensors that detect the heat energy an object gives off. Software then shows that energy as different colors on a screen. This is how a robot might see you! The warm parts of your face, like your mouth or cheeks, appear red because they emit more heat than a colder part of your face, like your nose, which appears blue. Thermal imaging is also used in robots that detect heat leaks in hazardous or remote environments like nuclear facilities or factory ductwork.
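As a rough sketch of how software might turn heat readings into colors, the Python snippet below maps a temperature to a color between blue (cooler) and red (warmer). The function name `temperature_to_rgb` and the 20 to 40 degree Celsius range are assumptions made for illustration; a real thermal camera would use its own calibrated palette.

```python
def temperature_to_rgb(temp_c, coldest=20.0, warmest=40.0):
    """Map a temperature to an (R, G, B) color: blue for cold, red for warm.

    The default 20-40 degree Celsius range is an assumed example range.
    """
    # Scale the temperature to a fraction between 0.0 (coldest) and 1.0 (warmest).
    fraction = (temp_c - coldest) / (warmest - coldest)
    fraction = max(0.0, min(1.0, fraction))  # clamp readings outside the range

    red = round(255 * fraction)
    blue = round(255 * (1.0 - fraction))
    return (red, 0, blue)


if __name__ == "__main__":
    print("cool nose:", temperature_to_rgb(24.0))
    print("warm cheek:", temperature_to_rgb(36.0))
```

With these assumed settings, a cooler reading comes out mostly blue and a warmer one mostly red, which matches the way the exhibit screen colors your face.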

Learn More

Thermal imaging robot finds termites

Color

Roll a ball and play a colorful tune at roboworld®!

Did you know that 80% of the information our brains take in comes from our eyes? Color is an important part of that – from red stop signs to brown bananas (yuck!). Robots use sensors to efficiently sort products based on their color as they move along an assembly line.

When a ball rolls down the ramp at this exhibit, the camera underneath snaps its picture. That digital picture is made up of thousands of dots of color called pixels. A sensor identifies the exact color of each pixel based on a color chart. The computer then determines which color is most common among the pixels, shows you the name of the color and plays a note.
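The last two steps (matching each pixel against a color chart, then picking the most common color) might be sketched in Python like this. The four-color chart, the note pairings, and the function names are all assumptions for illustration, not the exhibit's actual software.

```python
from collections import Counter

# A tiny, assumed "color chart": color name -> (R, G, B).
COLOR_CHART = {
    "red": (255, 0, 0),
    "green": (0, 255, 0),
    "blue": (0, 0, 255),
    "yellow": (255, 255, 0),
}

# Assumed pairing of colors with musical notes, for illustration only.
NOTES = {"red": "C", "green": "E", "blue": "G", "yellow": "A"}


def nearest_color_name(pixel):
    """Return the name of the chart color closest to one (R, G, B) pixel."""
    def squared_distance(name):
        return sum((a - b) ** 2 for a, b in zip(pixel, COLOR_CHART[name]))
    return min(COLOR_CHART, key=squared_distance)


def most_common_color(pixels):
    """Name every pixel, then return the name that appears most often."""
    counts = Counter(nearest_color_name(p) for p in pixels)
    return counts.most_common(1)[0][0]


if __name__ == "__main__":
    # A made-up four-pixel "picture" of a mostly red ball.
    picture = [(250, 10, 5), (240, 30, 20), (10, 10, 200), (255, 0, 0)]
    color = most_common_color(picture)
    print(color, "-> note", NOTES[color])
```

Counting names rather than raw pixel values means slightly different shades of the same ball still vote for the same color.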

Object Tracking

Have you ever tried to keep your eye on a fly as it buzzed around a room?

Object tracking systems allow a robot to identify and track something that is a specific shape or, as with this robot, color. When you push a color button, the robot looks for that color. Once its sensors lock on to the color, it adjusts its position to keep that color in its view. This robot is programmed to track these color balls, but it might also track the colors on your shirt!
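One simple way a robot could "keep that color in its view" is to find where the matching pixels sit in the picture and turn toward them. The Python sketch below assumes the picture is a list of rows of (R, G, B) pixels; the function names, the tolerance, and the gain are hypothetical choices, not details of the exhibit's robot.

```python
def find_color_center(image, target, tolerance=60):
    """Return the average column of pixels close to the target color, or None.

    `image` is a list of rows; each row is a list of (R, G, B) tuples.
    """
    columns = []
    for row in image:
        for x, pixel in enumerate(row):
            if all(abs(a - b) <= tolerance for a, b in zip(pixel, target)):
                columns.append(x)
    if not columns:
        return None  # target color is not in view
    return sum(columns) / len(columns)


def steering_command(image, target, gain=1.0):
    """Return a turn amount: negative = turn left, positive = turn right."""
    center_x = find_color_center(image, target)
    if center_x is None:
        return 0.0  # nothing to track, so hold position
    middle = (len(image[0]) - 1) / 2
    if middle == 0:
        return 0.0  # a one-pixel-wide picture has no left or right
    # Offset scaled to the range -1.0 (far left) .. +1.0 (far right).
    offset = (center_x - middle) / middle
    return gain * offset


if __name__ == "__main__":
    black, red = (0, 0, 0), (255, 0, 0)
    picture = [[black, black, black, black, red]]  # red ball at the right edge
    print(steering_command(picture, red))
```

Turning in proportion to the offset means the robot makes big corrections when the color is near the edge of its view and small ones when it is nearly centered.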

Object tracking systems are used in research, security, and manufacturing. A robot delivering supplies in a hospital might be programmed to follow a white line on the floor to find its way. A manufacturing robot might use sensors to sort square objects from round ones on an assembly line. Advanced systems can even recognize human faces! They look for a combination of shapes in a particular pattern, such as two circles (eyes) above a triangle (nose) above an oval (mouth).

Local Connection

The camera system used at roboworld®, the CMUcam3, was developed by a team at Carnegie Mellon University. It’s an inexpensive, small, highly programmable system that has become a standard for robotics students and hobbyists around the world.

Lidar

What do bats and robots have in common? They can both use time to measure distance! Some mobile robots use lidar, a type of long-range distance sensor, to help them get around. A lidar sends out laser light and measures the time it takes that light to bounce off an object and return to the sensor. This “reflection time” is used to determine the distance between the object and the sensor. Lidar sensors perform well at long ranges and are typically used to detect objects that are hundreds of feet or even miles away. Because of their long-range performance, lidars are often used in self-driving robotic vehicles to “see” the road and detect obstacles while driving.

Local Connection

A team at Carnegie Mellon University developed Boss, an autonomous robotic vehicle that makes extensive use of lidar to help it navigate city streets. Boss won the 2007 DARPA (Defense Advanced Research Projects Agency) Urban Challenge by successfully navigating a 60-mile road course with no human behind the wheel and no remote control. The robotic vehicle stayed on the road, obeyed traffic rules, and avoided other cars with the help of lidar, among other sensor systems.

Distance

No tape measure? No problem! Use the sonic range finder at roboworld® instead.

Robots use proximity sensors to detect their distance from an object. This helps them to avoid things like walls, or find things like a rock on Mars. The sensors here are sonic range finders. They work by bouncing sound off an object and measuring the time it takes the sound to return to the sensor. Most distance sensors use this “reflection time” method. Depending on the material or location of the object, different sensors are used. Sonar (sound waves) works best under water while lidar (laser light) and radar (radio waves) work well in the air and over long distances. These sonic sensors work best over short distances (several inches or feet). Many animals like bats and dolphins use the same method to detect objects in the air and water.
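The “reflection time” method comes down to one small calculation: the wave travels out and back, so the distance is the wave's speed times the round-trip time, divided by two. Here is a minimal Python sketch. The function name is hypothetical and the speeds are approximate textbook values; the same idea covers sonar, lidar, and radar by swapping the speed.

```python
# Approximate wave speeds in meters per second.
WAVE_SPEED_M_PER_S = {
    "sound_in_air": 343.0,      # at about 20 degrees Celsius
    "sound_in_water": 1480.0,   # fresh water, approximate
    "light": 299_792_458.0,     # laser light and radio waves (vacuum value)
}


def distance_from_echo(round_trip_seconds, wave="sound_in_air"):
    """Distance in meters to an object, given how long the echo took to return."""
    speed = WAVE_SPEED_M_PER_S[wave]
    # The wave travels to the object and back, so halve the total trip.
    return speed * round_trip_seconds / 2


if __name__ == "__main__":
    # An echo of sound in air that returns after 10 milliseconds.
    print(distance_from_echo(0.010), "meters")
    # A laser pulse that returns after one millionth of a second.
    print(distance_from_echo(1e-6, wave="light"), "meters")
```

Because light is so much faster than sound, a light-based sensor has to time far shorter intervals than a sonic one to measure the same distance.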

Accelerometer

“Accelerometer” is a very big word for a very tiny sensor. At roboworld® you can use an accelerometer-controlled device to move a ball through the maze. It gives you the “feel” of acceleration!

How tiny is an accelerometer? The one in the Wii™ video-game controller is about the size of Lincoln’s nose on a penny! In digital cameras, accelerometers determine the orientation to display an image. In cars, they detect collisions and inflate airbags. And in video games, they sense how a player moves the controller. Robots use accelerometers to determine whether they are traveling uphill, crossing uneven terrain, or have just hit an unexpected obstacle.
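How might a robot tell that it is traveling uphill? When it is not speeding up or slowing down, an accelerometer mostly feels gravity, and the direction of that pull reveals the tilt. The Python sketch below assumes a three-axis sensor with x pointing forward and z pointing up, reporting in units of g; the function names and the 5-degree threshold are illustrative assumptions.

```python
import math


def pitch_degrees(ax, ay, az):
    """Estimate nose-up tilt in degrees from one accelerometer reading.

    Assumes x points forward, z points up, readings are in units of g,
    and the robot is not speeding up or slowing down (so the sensor
    feels only gravity). A level robot would read about (0, 0, 1).
    """
    return math.degrees(math.atan2(ax, math.hypot(ay, az)))


def is_traveling_uphill(ax, ay, az, threshold_degrees=5.0):
    """True if the estimated nose-up tilt is above an assumed threshold."""
    return pitch_degrees(ax, ay, az) > threshold_degrees


if __name__ == "__main__":
    print("level floor:", is_traveling_uphill(0.0, 0.0, 1.0))
    print("steep ramp: ", is_traveling_uphill(0.5, 0.0, 0.87))
```

A sudden spike in the readings, rather than a steady tilt, is the kind of signal that could indicate a bump or collision.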

3-D Vision

Ever wonder what things look like to a robot? At roboworld®, you can use a track ball to rotate an image of yourself. It’s just like getting right inside the robot’s “head”!

Our brains combine the two slightly different images from each of our eyes to produce a 3-D image, allowing us to see depth. But a robot might see depth with only one “eye”! This 3-D sensor bounces beams of infrared light (invisible to human eyes) off objects in front of it, then measures the time it takes that light to be reflected back. By measuring the difference in how long it takes for the infrared light to hit each one of thousands of tiny pixel sensors (side by side in a grid only the size of a postage stamp!), the computer can create a moving 3-D image of the world around it. Like people, some robots can recognize shapes, colors, and moving objects on a video screen. But it’s difficult to detect depth from a flat image. Advanced depth sensors like this will allow better design of navigation and object detection systems for robots.

Force

Just like us, robots need to get a grip! Try to control a robot hand at roboworld®.

If a robot applies too little force to its gripper, the object it is trying to pick up could slip; too much force, and the thing is squashed. The solution: a force sensor located in the robot’s gripper allows it to apply the right amount of force every time. Force sensors are often used in manufacturing where products like car parts are grasped and moved with incredible precision. Did you know you have force sensors too? Nerves in our skin allow us to detect and adjust the force we exert on things we handle – from bowling balls to eggs. Our lightning-quick nervous systems also tell us when we need to grip things more tightly so they don’t slip. Robots, though, often have to be reprogrammed for each different thing they handle.

Radial Encoder

Not all robots use vision systems in their work. So how does a “blind” robot know where it is moving?

This rotational sensor reads light and dark sections of a disc to determine how much it – and any object it is attached to – has rotated. Each pie-shaped section of the disc has a different light and dark pattern, representing part of a 360° full rotation. By identifying the light-dark patterns, sensors determine how far the disc has moved. For instance, the pattern of dark-dark-dark-light (represented as 0-0-0-1) shows the disc has rotated between 22.5° and 45°. If the robot receives a 0-0-0-1 signal from the sensor, it knows its arm has rotated to that position. Rotational sensors can be used in a robot’s joints to track its position, or in its wheels to determine how far or fast it is moving. It’s like Twister® for robots!
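The 0-0-0-1 example can be worked out in a few lines of Python. This sketch assumes dark reads as 0, light reads as 1, and the four digits form an ordinary binary number that counts sections around the disc; that numbering is an assumption chosen because it reproduces the 22.5° to 45° range given above. The function name is hypothetical.

```python
def decode_encoder(pattern):
    """Turn a light/dark reading like "0-0-0-1" into an angle range in degrees.

    Assumes dark = 0, light = 1, and that the digits form an ordinary
    binary number counting the sections around the disc.
    """
    bits = pattern.replace("-", "")
    section = int(bits, 2)                 # "0001" is section number 1
    section_size = 360 / (2 ** len(bits))  # 4 digits give 16 sections of 22.5 degrees
    start = section * section_size
    return (start, start + section_size)


if __name__ == "__main__":
    low, high = decode_encoder("0-0-0-1")
    print(f"Rotated between {low} and {high} degrees")
```

Many real encoders number their sections with a Gray code instead of plain binary, so that only one light or dark spot changes between neighboring sections; the decoding step would differ, but the idea of mapping a pattern to a slice of the circle is the same.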